Deep Cognition and Language Research Lab

Research in Safe, Trustworthy, Multimodal AI

DeCLaRe Lab studies language, multimodal, and interactive AI systems across six themes: Safety, Trustworthiness, Multimodality, AI for Science, Efficiency, and Embodied AI.

About DeCLaRe Lab

Deep Cognition and Language Research

DeCLaRe stands for Deep Cognition and Language Research. The lab was established at the Singapore University of Technology and Design in 2019 by Soujanya Poria, with Navonil Majumder, Devamanyu Hazarika, and Deepanway Ghosal as founding members. In 2025, DeCLaRe Lab moved to Nanyang Technological University.

Today the lab works on methods, benchmarks, and open research artifacts for language, vision, audio, video, knowledge, and action, with the goal of building AI systems that are capable, grounded, interpretable, and robust in settings that require more than benchmark accuracy.

Lab identity

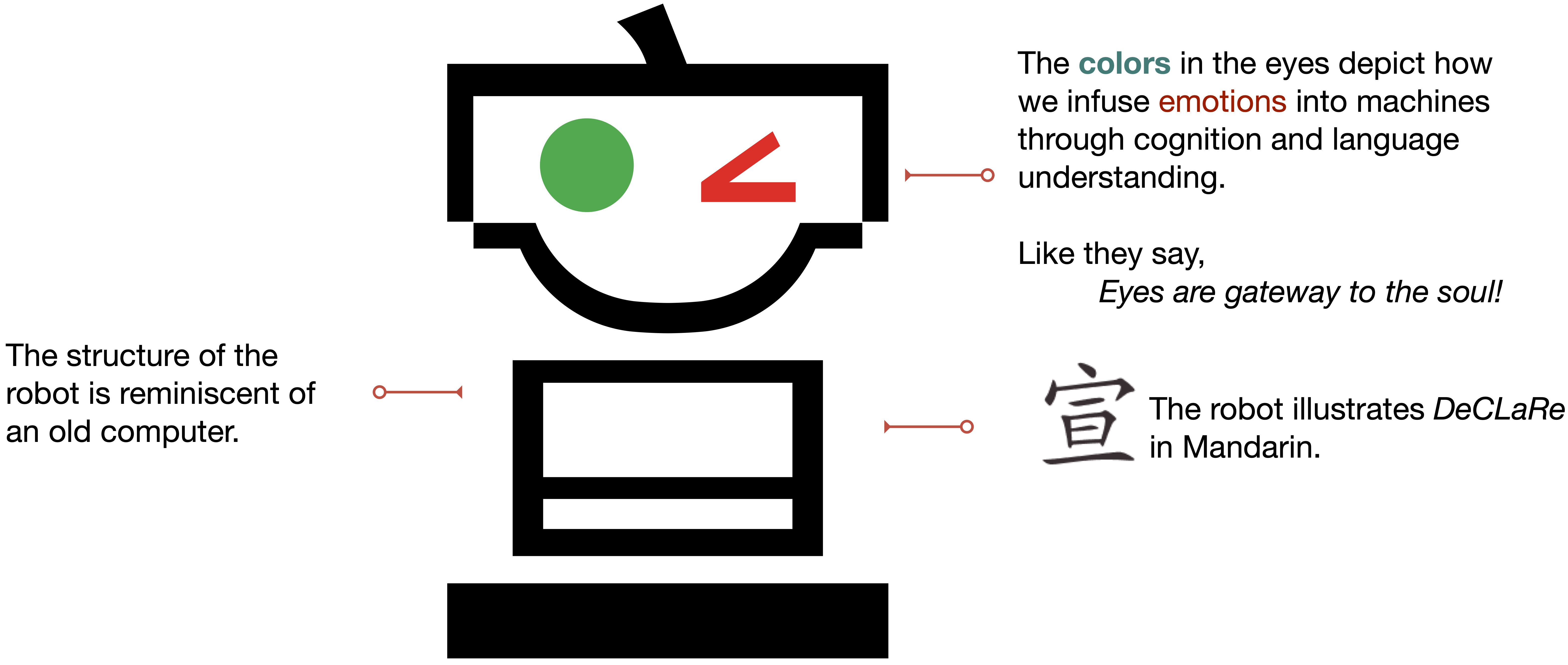

The meaning behind the DeCLaRe logo

The DeCLaRe logo represents intelligence as something that emerges through connection. The robot anchors the identity as an intelligent social agent, while its red and green eyes reflect the affective, cognitive, and linguistic dimensions of AI. The DeCLaRe wordmark is built from geometric forms joined by a subtle internal construction line. This line carries multiple meanings at once: it suggests modular components assembled into a system, agents connected in an AI society, reasoning steps linked across time, memories and concepts interacting, and collaboration between humans, machines, and institutions. Together, the robot and wordmark express DeCLaRe's broader vision: intelligence is not isolated, but constructed, social, and collaborative.

Research themes

Research Areas

Six connected themes that organize the lab's current work.

Safety

Operational safety, red-teaming, refusal behavior, and alignment interventions.

Trustworthiness

Grounded attribution, reliable RAG, uncertainty, and citation-aware responses.

Multimodality

Language, vision, audio, and video models for reasoning and interaction.

AI for Science

Scientific hypothesis discovery, chemistry, and literature-grounded reasoning.

Efficiency

Data selection, adapters, token pruning, and compact model training.

Embodied AI

Vision-language-action systems, action grounding, and interactive evaluation.

Projects

Representative Work

Recent work and long-running research lines across the lab's themes.

Safety, red-teaming, and bias

From operational safety and jailbreak evaluation to realignment and bias analysis.

Grounding, attribution, and refusal

Methods that improve factuality, citation quality, and reliability in knowledge-intensive generation.

Multimodal understanding and generation

Long-running work on multimodal emotion, fusion, and text-to-audio generation.

Scientific hypothesis discovery

LLM systems for rediscovering and proposing scientific hypotheses from literature.

Efficient training, merging, and attention

Methods for cheaper adaptation, model merging, data selection, and long-context computation.

Vision-language-action models

Compact embodied models and reward-guided post-training for perception-to-action systems.

Publications

Hot Papers 🔥

Selected recent and highly cited papers.

δ-mem: Efficient Online Memory for Large Language Models

Lightweight online memory through a compact state directly coupled with attention.

OffTopicEval: When Large Language Models Enter the Wrong Chat, Almost Always!

Operational safety and task-boundary evaluation for LLM agents.

Measuring and Enhancing Trustworthiness of LLMs in RAG

Trust-Score, Trust-Align, grounded attributions, citations, and refusal.

MOOSE-Chem: LLMs for Rediscovering Chemistry Scientific Hypotheses

AI for Science through literature-grounded chemistry hypothesis rediscovery.

Data Agent: Learning to Select Data via End-to-End Dynamic Optimization

Efficient training through dynamic data selection.

NORA: A Small Open-Sourced Generalist Vision Language Action Model

Efficient embodied AI and action grounding.

TangoFlux: Super Fast and Faithful Text-to-Audio Generation with Flow Matching

Fast, faithful text-to-audio generation via flow matching.

Research support

Funded Research Directions

An overview of major active grants. The full grant record is maintained separately.

Embodied Foundational Models

Total S$10M; awarded S$3.33M. Research on embodied foundation models and generalist interactive AI.

Toward Generalist Vision Language Action Models

S$1.5M support for vision-language-action models, action grounding, and embodied evaluation.

Detecting, Measuring and Mitigating Hallucinations in LLMs

S$600K support for grounded, reliable, and trustworthy language model generation.